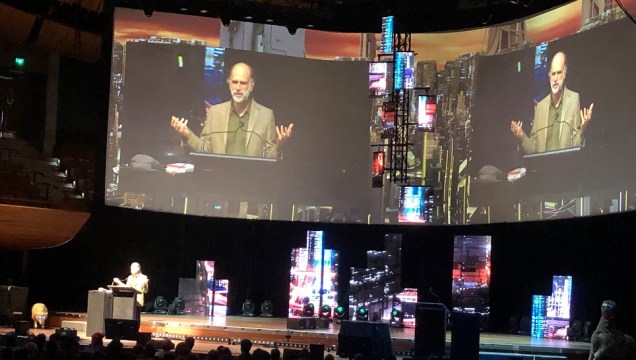

I recently attended the fantastic Kiwicon 2038 InfoSec conference (shout out to the crew and speakers – you guys rock!) in Wellington, New Zealand. An eclectic cast of speakers, including Bruce Schneier, Kelly Ann from Slack and many others, delivered thought-provoking talks accompanied by ‘flame effects’[1].

We’re all doomed…

Bruce Schneier, a celebrity in the world of InfoSec, painted a grim picture of the future of security, to the point that partway through the talk he had me convinced that we’re all doomed…

Only at Kiwicon: Bruce Schneier prophesying doom (and plugging his new book[2]) while a sheep wearing neon sunglasses looks on…

Bruce pointed out that everything has a computer in it (well, most things. I for one don’t – yet…), but no one wants to pay for good, secure software, and increasing complexity is outpacing improvements in security. Added to that, attackers are evolving, so defence is an arms race just to keep up. But it gets worse – it’s no longer just about privacy breaches – security flaws are now killing people and damaging property… Cybergeddon[3] is coming, we’re all doomed!!!

That got me thinking…

Or are we? To understand this better, we need to wind the clock back and see where we’ve come from…

In the Beginning…

Once upon a time, InfoSec was a chivalrous pursuit, a noble fight between good and evil, defined by fortifications against seldom-seen marauders[4]. Over time, the defences grew better (firewalls! spam filters! self-defending networks‽ patching!!!) and the kingdoms looked like they were set to become peaceful havens.

…and Now

Somewhere between then and now things fundamentally changed. Complexity increased significantly (computers in everything), and the lines between good and evil have blurred to the point where they are becoming increasingly meaningless. Are governments good or evil? What about big tech companies hoovering your data to sell to questionable parties?

Whereas in the halcyon days of the past, good guys (aka the IT team) held back the infrequent advances of the enemy (usually a pimply-faced youth hacker), we now live in a world where the adversaries are often well organised, highly skilled, and very hard to trace. There are dark markets where malware and hacking services are bought and sold. The world of InfoSec has descended into a murky swampland, where warlords and factions fight for unclear goals, with frequently shifting allegiances. Even those on the same side inflict unintended wounds on each other (friendly fire? more on this later).

Defence in an Era of InfoSec Chaos?

In this post-good-vs-evil world, how does the ‘blue team’ continue to defend its patch, and how can we save the world from cybergeddon? Far be it from me, an InfoSec outsider, to answer these questions. I do, however, have a perspective as an IT architect – perhaps taking a systems view of InfoSec may help?

Systems View of InfoSec

When we consider InfoSec as many interconnected systems, we start to see the complexity of the whole, the different trends, and the tendency over time to converge toward equilibrium. This view can be useful, as it stops us obsessing over small details[5].

Complex Systems and Emergent Properties

From a global perspective, InfoSec can be viewed as a complex system, comprising 7 billion humans and 20 billion connected devices[6], that has emergent properties. For example, a blue team cannot know the exact behaviour and motivations of every cyber-criminal or hacked device globally; however, they can understand emergent properties of the cyber-criminal system, such as the motivation for financial gain, the types of hacking techniques used and the overall statistical frequency of attacks.

Another feature of complex systems is that the results of changes are difficult to predict and may come with unintended consequences. This creates a feedback loop that discourages large changes. For instance, when Russia (allegedly) used NotPetya against Ukraine[7] in 2017, it caused considerable collateral damage, including to Russian companies (friendly fire?[8]). The attack itself was built on software developed by the US National Security Agency (NSA), which was later stolen. Complicated, huh?

This brings us to another disincentive to launching cyber-attacks: a cyber weapon is not like a physical weapon. In an attack, the code itself is handed to the enemy, and even with the best obfuscation it is likely to be reverse engineered into some form of source code the adversary can reuse. Worse still, the attacker’s critical infrastructure is likely to include systems from the same vendors as their victim’s (it’s a global market), leaving them vulnerable to counter-attack.

The risk of reprisal and unintended consequences from cyber-attacks is a useful feedback loop in the context of the whole system; it provides a disincentive to launching attacks, broadly comparable to the nuclear détente resulting from mutually assured destruction.

Understanding Motivations

Within a system there are actors, and they have their own motivations. Understanding motivations, often driven by incentives, can be enlightening. Here are some examples:

Security vendors (who sell security products) are motivated by sales targets rather than by providing security benefits to their customers (except where it drives more sales), and their R&D budgets are often severely constrained. Contrast this with cloud service providers and other big tech companies. Their motivations are also sales-driven; however, they understand the impact of security vulnerabilities on their sales (their customers often consume services month-to-month or pay-as-you-go, and can easily leave), and they leverage their scale to build security into their services. They are thus arguably more motivated and more capable at security than bespoke security vendors.

Likewise, the motivations of Information Security teams are worth discussing. They are generally motivated by protecting their company from security compromise, but seldom by usability, shipping feature enhancements or other advantages for the company as a whole. This can lead to perverse incentives, where security products and policy restrictions that reduce the competitiveness of the company[9] are installed to fulfil the goals of the InfoSec team. These problems are hard to solve in isolation, but become clearer when viewed as part of a larger system.

Reduce Complexity

Another part of the solution has to be reducing system complexity. The number of computers in the world is rising rapidly, driven by changes such as the Internet of Things (IoT): computers without a person to tend to them, such as lifts, lightbulbs and even fish tanks[10]. This scale doesn’t have to drive complexity; the technology could be built on a small set of common, reasonably secure platforms. That is not the case today, however – there are huge variations in software and hardware. While a monoculture would be bad, settling on a smaller set of common ways to build systems would benefit the whole system. Moves are already afoot to improve this, such as IoT solutions from Google, Microsoft and Amazon.

Coding as a System

It is tempting to look at the rough correlation between the number of lines of code written and the number of security exploits[11], and set incentives to reduce the total codebase; however, this can create a perverse and counterproductive incentive to cram more code onto each line.

A better approach may be to see coding as a system, where we need to build in better checks and feedback loops to improve the security of the resulting code. We’re still using languages such as C that are not memory-safe; however, over time we’re learning lessons and designing languages that are better at protecting us feeble humans from coding unintended vulnerabilities.
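To make the memory-safety point a little more concrete, here is a minimal sketch in Python, chosen purely for illustration (no particular safer language is being recommended here). A memory-safe runtime checks every index against the buffer’s bounds, so an out-of-range read fails loudly instead of quietly returning whatever happens to sit in adjacent memory, which is the kind of over-read that C permits. The `read_byte` helper is hypothetical.

```python
from typing import Optional


def read_byte(buffer: bytes, index: int) -> Optional[int]:
    """Return the byte at `index`, or None if the read would be out of bounds.

    Hypothetical helper for illustration. In C, buf[index] with an
    out-of-range index is undefined behaviour and may silently expose
    neighbouring memory; Python checks the bound on every access instead.
    """
    if index < 0:
        # Python treats negative indices as offsets from the end; reject them
        # so the example mirrors an unsigned, C-style offset.
        return None
    try:
        return buffer[index]  # bounds-checked by the runtime
    except IndexError:
        # The runtime refused the out-of-bounds read; nothing adjacent leaks.
        return None


payload = b"ping"
print(read_byte(payload, 1))   # 105, the byte value of "i"
print(read_byte(payload, 10))  # None: rejected rather than over-read
```

The safety check here is not something the programmer has to remember; it is built into the language, which is the kind of system-level feedback loop described above.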

Consider Defensive Trade-Offs and Avoid ‘Friendly Fire’

Almost every InfoSec defence comes with some form of trade-off, some of which are painful to the point of being considered ‘friendly fire’.

Take, for instance, password policies in organisations. They often have trade-offs that are not considered in the context of the wider system. Enforcing strong passwords that must be changed regularly would, on the face of it, seem to improve security; however, unintended consequences include users writing their passwords down on sticky notes, and more calls to the password reset line[12] (see the obligatory xkcd).
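The password trade-off (and, presumably, the xkcd strip alluded to above, ‘Password Strength’) rests on some simple arithmetic: a secret whose symbols are each picked uniformly at random has an entropy of length × log2(choices per symbol) bits. The minimal sketch below assumes truly random selection, which is an upper bound; human-chosen passwords score far lower. The 2,048-word list and the 94-character alphabet are illustrative assumptions, and `random_secret_entropy_bits` is a hypothetical helper.

```python
import math


def random_secret_entropy_bits(choices_per_symbol: int, length: int) -> float:
    """Entropy in bits of a secret made of `length` symbols, each picked
    uniformly at random from `choices_per_symbol` options.

    Hypothetical helper; only an upper bound if the secret is truly random.
    """
    return length * math.log2(choices_per_symbol)


# Four words picked at random from an assumed 2,048-word list.
passphrase_bits = random_secret_entropy_bits(choices_per_symbol=2048, length=4)

# Eight characters picked at random from the 94 printable ASCII
# characters (excluding space).
complex_password_bits = random_secret_entropy_bits(choices_per_symbol=94, length=8)

print(f"4-word passphrase: {passphrase_bits:.1f} bits")  # 4-word passphrase: 44.0 bits
print(f"8-char random password: {complex_password_bits:.1f} bits")
```

On paper the random 8-character password wins (roughly 52 bits versus 44), but it is hard to remember, so in practice users fall back on predictable patterns or sticky notes. The passphrase gives up a little theoretical entropy in exchange for memorability, which is exactly the kind of system-level trade-off worth weighing before mandating a policy.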

Other trade-offs can include security software agents that consume resources impacting system performance, and extra authentication challenges that slow down or prevent people getting to where they need to go. They’re not necessarily bad things, but the trade-offs must be considered and agreed to by the right stakeholders.

Sometimes InfoSec goals can even conflict with each other, and things get really murky. For example, tools for data loss prevention, malware scanning of downloads and so on can conflict with secure encryption when they have to man-in-the-middle (MitM) the traffic to see the decrypted stream. This has been shown to reduce the effectiveness of encryption[13].

Moral of this story: carefully consider trade-offs and impact to the wider system before deploying security protections.

Behavioural Economics View

Bruce, in his aforementioned Kiwicon talk, postulated that the market doesn’t reward security. Pure capitalism drives perverse incentives (think of the Ford Pinto memo[14]), and regulation is required to ensure good security outcomes; otherwise, companies may see data breaches as less expensive than implementing proper security. Governments are thus part of the overall InfoSec system and need to play their part by regulating, where required, to protect consumers.

Cloud to the Rescue…

There is a big change underway in the economic model of tech that gives me hope that we can return to a more secure landscape, emerging from the dank swamp of unpatched vulnerabilities and data breaches, back into the light meadows of safe secure computing filled with unicorns and rainbows, and that change is public cloud[15]. Globally, only a handful of mega-tech companies have the capital and scale to build data centres and platforms needed to be proper cloud players. From a systems perspective, this is a good thing as it reduces complexity through fewer variants, and also quality should be higher as the players have sufficient scale to resource security properly. Is it perfect? Hell no (misconfigured S3 buckets anyone?). But it’s better than thousands of half-baked implementations, many of which have exploitable vulnerabilities through poorly written software and/or lack of patching. The counterpoint to this is we need to carefully consider how we regulate this small set of global mega-tech companies so they don’t abuse the market, and also consider how to minimise the fallout should one of them get owned or otherwise fail…

Wrapping Up – We’re Not Completely Doomed After All…

When considering InfoSec as a system, I’ve convinced myself that we’re far from doomed; disincentives make ‘cybergeddon’ unlikely to transpire[16]. Also, the tech industry is consolidating, and this is bringing better security through simplification and economies of scale. It’s also easy to lose sight of the benefits of technology when you’re immersed in the murky world of InfoSec; overall, tech has had a massive benefit to society.

We need to stop viewing things in isolation and consider their wider impact on the system. This is complex, and there are often unintended consequences to deal with. InfoSec is no longer a fight of good vs evil where vendor security products will save the day. It is engineering: there are trade-offs, and there is no perfect state. We need to sustainably tune the defences to maintain the whole, much as our biological immune system does for us[17].

As Bruce pointed out, we also need sensible government and international policies and laws that regulate InfoSec to protect individual rights and punish those individuals, companies, criminal organisations and nations that violate them. This is a difficult problem to solve, and we’re unlikely to ever fully fix it. But improving multilateral global agreements will go a long way to better setting incentives for companies and individuals.

I enjoyed my time at Kiwicon mingling with the black hoodie-clad masses, and I am immensely glad for the work that the InfoSec community does. I now return to the world of IT architecture, with its own set of challenges, and consider how it also benefits from taking a systems view.

Footnotes:

[1] Pyrotechnics by another name

[2] Bruce’s book: https://www.schneier.com/books/click_here/ (one day I may read it)

[3] I use the term ‘cybergeddon’ in a humorous manner, in case that isn’t clear

[4] Is my memory of this time through rose-tinted glasses?

[5] Although it is still perfectly valid, and required, for some people to obsess about these details.

[6] I have no idea if these numbers are correct, but neither does anyone else, so they’ll do to illustrate the point.

[7] Great write-up from Wired at https://www.wired.com/story/notpetya-cyberattack-ukraine-russia-code-crashed-the-world/

[8] Yes, I know it’s a horrible oxymoron

[9] Never forget usability. Users hate friction and will work around controls where they can.

[10] Bruce Schneier made the fish tank reference in his talk, see: https://www.businessinsider.com.au/hackers-stole-a-casinos-database-through-a-thermometer-in-the-lobby-fish-tank-2018-4 for the back story

[11] For an old discussion on exploits per lines of code by language and app see: https://security.stackexchange.com/questions/21137/average-number-of-exploitable-bugs-per-thousand-lines-of-code

[12] You should consider multi-factor authentication (MFA)…

[13] See: https://insights.sei.cmu.edu/cert/2015/03/the-risks-of-ssl-inspection.html

[14] See: https://en.wikipedia.org/wiki/Ford_Pinto#Cost-benefit_analysis,_the_Pinto_Memo

[15] Yes, I know public cloud isn’t actually public. It’s just a name, okay?

[16] Although this does assume rational actors. So, maybe I’m wrong…

[17] But without killing us along the way, as extreme allergic reactions can do