One of my 2018 learning goals is to become more familiar with containers. This blog post (first of a series) is intended to help broaden my own understanding of containers; hopefully it is also of use to others.

What is a Container?

A container is a type of application virtualisation that isolates and sandboxes application processes within an operating system (OS).

On Linux, a container is one or more processes isolated from other processes through namespaces. The container sees a separate, isolated filesystem:

Overview of how a container runs on Linux
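To make the namespace idea a little more concrete, here is a minimal Python sketch that lists the namespaces the current process belongs to. It assumes a Linux host with `/proc` mounted; the function name `list_namespaces` and its `ns_dir` parameter are my own illustration, not part of any container tool.

```python
import os
from pathlib import Path


def list_namespaces(ns_dir="/proc/self/ns"):
    """Return {namespace_name: identifier} for the entries under ns_dir.

    On Linux each entry is a symlink whose target looks like
    'pid:[4026531836]'. Two processes share a namespace when the
    identifiers for that namespace are equal.
    """
    namespaces = {}
    base = Path(ns_dir)
    if not base.is_dir():
        # Not on Linux, or /proc is not mounted: nothing to report.
        return namespaces
    for entry in sorted(base.iterdir()):
        if entry.is_symlink():
            namespaces[entry.name] = os.readlink(entry)
    return namespaces


if __name__ == "__main__":
    for name, ident in list_namespaces().items():
        print(f"{name:20} {ident}")
```

Running the script once on the host and once inside a container will typically show different identifiers for namespaces such as `pid` and `mnt`, which is the isolation described above.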

Containers are a type of operating-system-level virtualisation. Like a physical container, you can move them somewhere else (as long as the location supports containers), and the app will run, as the container is self-contained and has standard underpinnings.

No, not one of these… but there are some parallels

Building on the physical container metaphor, just as you can load many shipping containers on a container ship, you can run many instances of containers on the same container host.

Containers start their life as an image, a package of application code, libraries and other dependencies, environment variables and config files. Think of an image as a recipe that can be used to make containers. When an image is brought up on a container runtime it is now called a container.

How images turn into containers
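The recipe analogy can be sketched as a toy model in Python. This is purely illustrative: `Image`, `Container` and `run` are hypothetical names of my own and not the API of any container runtime. The point is that an image is an immutable package, and each container is a separate running instance made from it.

```python
from dataclasses import dataclass, field
from itertools import count

_next_id = count(1)  # hypothetical ID source for this toy model


@dataclass(frozen=True)
class Image:
    """Immutable package: code, dependencies and default environment."""
    name: str
    code: str
    dependencies: tuple = ()
    env: tuple = ()  # (key, value) pairs, kept as a tuple so the image stays immutable


@dataclass
class Container:
    """A running instance created from an image."""
    image: Image
    env: dict = field(default_factory=dict)
    running: bool = False
    container_id: int = field(default_factory=lambda: next(_next_id))


def run(image, **env_overrides):
    """Bring an image up as a new container, optionally overriding env vars."""
    env = dict(image.env)
    env.update(env_overrides)
    return Container(image=image, env=env, running=True)


if __name__ == "__main__":
    web = Image("web", "app.py", ("flask",), (("PORT", "8080"),))
    first = run(web)
    second = run(web, PORT="9090")
    print(first.container_id, first.env)
    print(second.container_id, second.env)
```

Both containers come from the same image, yet each has its own identity and its own environment, and the image itself is left unchanged.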

How Containers Compare to Other Ways to Run Apps

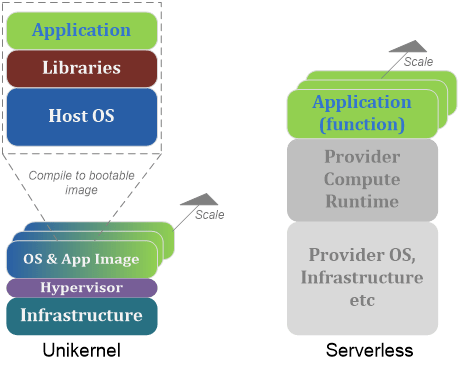

Containers offer a convenient way to horizontally scale applications. There are also alternative virtualisation technologies, including Virtual Machines (VMs), Unikernel and Serverless, as shown in the diagrams below.

The simplest way to run an app is to use a dedicated server. While this has the advantage of simplicity, it compromises portability and is an inefficient use of server resources: the server has to be provisioned for peak capacity, so there are long periods where CPU and memory sit idle.

Scaling apps using dedicated servers or VMs

Virtual Machines (VMs) introduce a hypervisor layer that pretends to be a bare metal server to the guest operating systems it hosts. It thus becomes possible to run multiple guest OSes on a host server, and an application can be scaled this way. VMs make more efficient use of underlying resources, and improve application portability by allowing the use of VM images. However, they are not a particularly efficient method for deploying smaller applications, as each image has a complete copy of all OS code, which uses extra resources to run.

Containers can be run on a host OS or combined with VMs and run in a guest OS. They use a container runtime to host the containers.

Scaling apps with containers

Unikernel is a new concept where the application, dependencies and OS are compiled into a specialised minimalistic bootable image. These can be scaled by running multiple images across hypervisor(s).

Scaling apps with Unikernel or Serverless

Serverless is a concept where the application is stripped back to just code (a function) and the application runtime and OS are provided as a ‘black box’ service. Scaling thus becomes the responsibility of the service provider.

When to Consider Using Containers?

Consider using containers if you need some or all of these properties:

- Portability. Containers make it easier to break dependencies between applications and infrastructure, meaning the application can be run in more places, and the infrastructure can be updated with less concern about breaking the application. Horror stories abound in IT of strange applications (sometimes without source code) running on ancient unsupported hardware. Often this is because the app is tied to the hardware version and cannot be moved. Avoid this situation like the plague! A container image has all application dependencies packaged, so can run anywhere the container underpinnings exist. There is a proviso to this – container portability does not guarantee compatibility – the container must still be compatible with the underlying operating system (see next point).

- Compatibility. Containers do not promise to run on any infrastructure that has a container runtime. Compatibility is still required with the underlying OS[1]. This compatibility can be abstracted by leveraging image layers (more on this in a future post).

- Efficiency. Containers allow more application workload to run on the same amount of infrastructure (compute, storage, memory), when compared to VMs[2]. They also start significantly faster[3].

- Agility. Containers make it faster to deploy applications and services. There are patterns and tools that integrate containers into DevOps CI/CD development pipelines[4]. Container images can also be version controlled, making it easy to control releases.

- Scalability. Containers can easily be scaled up, as many containers can be deployed on a single host, and they can be scaled horizontally to multiple hosts without concern for dependencies.

- Application Isolation. Containers make it possible to isolate (sandbox) the application from other processes on the same host, and to make a limited form of resource guarantees.
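As a rough illustration of the scalability point, the sketch below spreads a number of identical container replicas across several hosts, always choosing the host with the most free capacity. The function name `place_replicas` and the idea of counting capacity in "slots" are assumptions made for this example; real container schedulers are considerably more sophisticated.

```python
def place_replicas(replica_count, free_slots):
    """Spread replicas across hosts, most free capacity first.

    free_slots maps a host name to the number of containers it can
    still accept. Returns a mapping of host name to replicas placed.
    Raises ValueError when the hosts cannot fit every replica.
    """
    remaining = dict(free_slots)  # copy so the caller's dict is untouched
    placement = {host: 0 for host in free_slots}
    for _ in range(replica_count):
        # Pick the host with the most free slots (first one wins a tie).
        host = max(remaining, key=remaining.get, default=None)
        if host is None or remaining[host] <= 0:
            raise ValueError("not enough capacity for all replicas")
        remaining[host] -= 1
        placement[host] += 1
    return placement


if __name__ == "__main__":
    print(place_replicas(5, {"host-a": 3, "host-b": 3}))
```

Because every replica comes from the same image and carries its own dependencies, adding another host only means adding another entry to the capacity map.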

Additionally, containers are a good basis for deploying a microservices architecture, as they are a convenient method for building small modular applications.

Wrapping it up

I hope this post has been of use to you. If you have any corrections or other ideas then get in touch via the comments.

In the next blog post we will cover what Docker is, how to be a container farmer, and why you may want to build a containerised application-layer SDN.

[1] See: https://www.redhat.com/cms/managed-files/li-container-image-host-guide-tech-detail-f8326kc-201708-en.pdf

[2] 4-6 times improvement according to http://www.zdnet.com/article/what-is-docker-and-why-is-it-so-darn-popular/

[3] https://cloud.google.com/containers/

[4] For example, see: https://goto.docker.com/rs/929-FJL-178/images/docker-hub_0.pdf
